Module 2.0: Exploring the Various Types of Generative Models: Text, Image, Video, Voice and More

©️You are free to adapt and reuse provided (1) that you provide attribution and link to the original work, and (2) you share alike. This course and all of its contents are the property of UseRogue.com and are offered under the Creative Commons BY-SA 4.0 License.

I. Introduction

As the field of artificial intelligence (AI) continues to advance, many professionals are recognizing the importance of staying current on the latest developments, particularly in the realm of generative models. Generative models are a type of AI that learns the underlying patterns in data and can generate new examples that mimic the training data. These models are revolutionizing diverse sectors, from entertainment and marketing to healthcare and security.

II. Sources

There is a wide array of models, both open source and productized, for each medium of generated content, and the number is growing daily. In general, there are a few distinct ways to access these models:

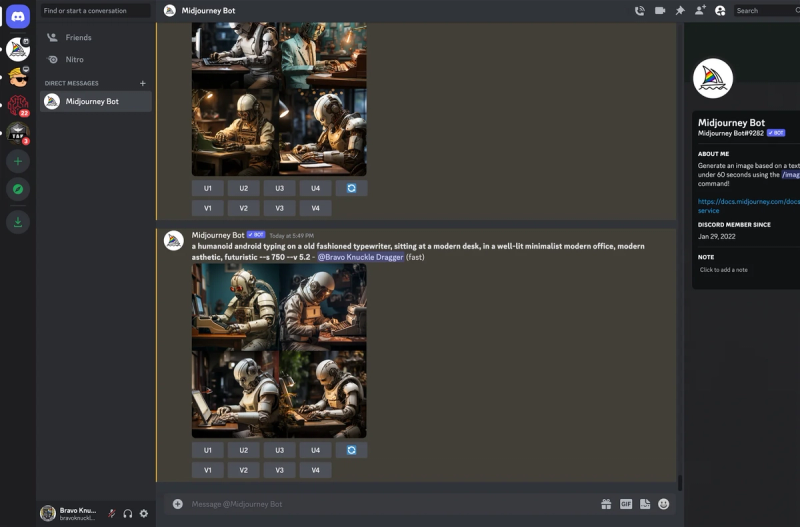

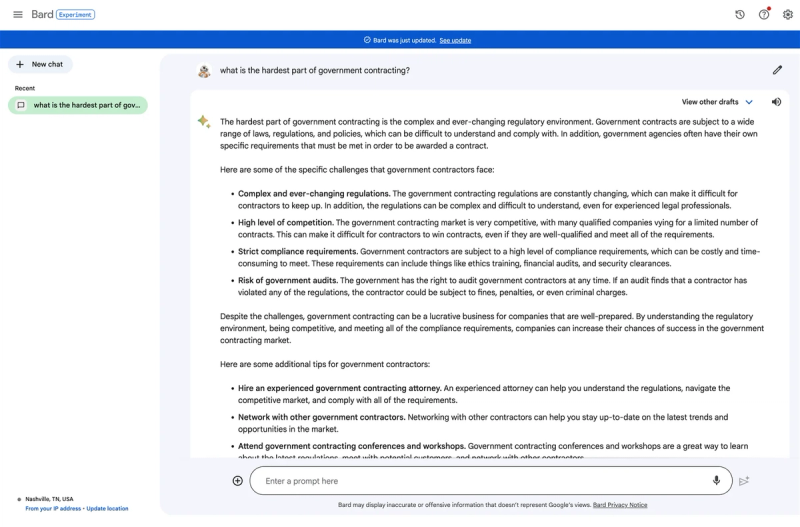

1. Through a commercial tool, such as ChatGPT, Bard, Claude, the Edge browser or the Midjourney Discord. These are some of the most user-friendly ways to use these models. Midjourney, for instance, a great tool for digital art, is only accessible through a Discord server.

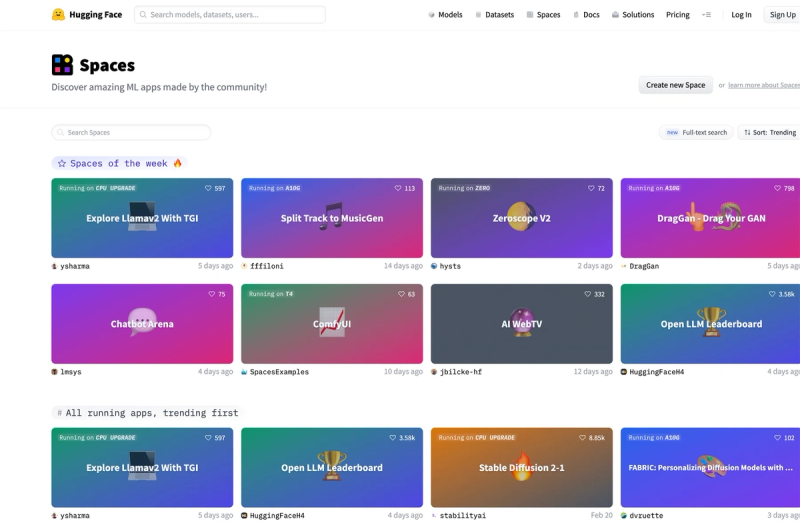

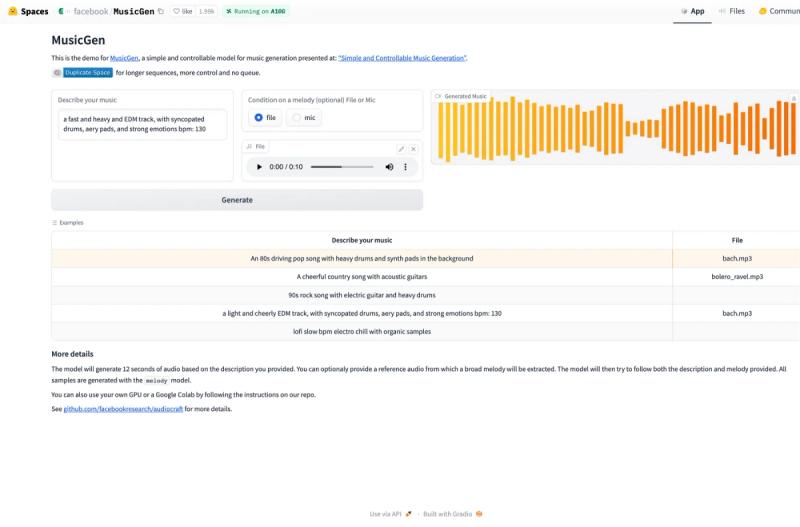

2. Through a model playground. These are available on commercial platforms like OpenAI and, for open source models, on sites like Hugging Face. Hugging Face is a great place to play around with the latest models as they come out, typically for free.

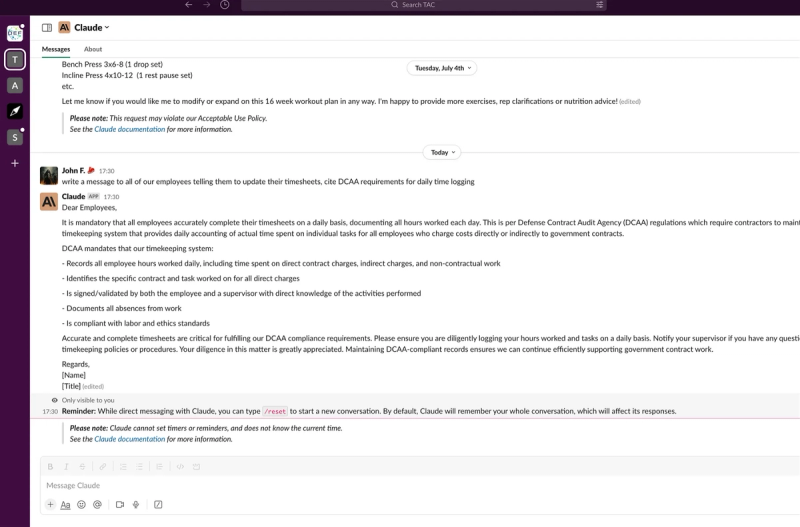

3. Through browser extensions and other integrations. There seems to be an AI plugin for everything now, including the OpenAI mobile app and Anthropic plugins for Slack, and the list goes on.

4. Through a tool that connects to an Application Programming Interface for one of the models. There are many of these tools now, such as gomoonbeam.com for blog and essay writing.
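To make the fourth route a little more concrete, here is a minimal sketch of what such a tool does behind the scenes: it packages your prompt as JSON and sends it to a model's API over HTTP. The endpoint URL, the field names (`prompt`, `max_tokens`) and the `build_request` helper are hypothetical placeholders, not any real provider's API, so check your provider's documentation for the actual request format. The sketch only builds the request; it does not send it.

```python
import json
import urllib.request

# Hypothetical endpoint and key, for illustration only.
API_URL = "https://api.example.com/v1/generate"
API_KEY = "YOUR_API_KEY"


def build_request(prompt: str, max_tokens: int = 200) -> urllib.request.Request:
    """Package a prompt as a JSON POST request (field names are assumptions)."""
    payload = {"prompt": prompt, "max_tokens": max_tokens}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


request = build_request("Draft a two-sentence summary of our past performance.")
print(request.get_method(), request.full_url)
print(json.loads(request.data))
# A real tool would now send the request (for example with
# urllib.request.urlopen) and show the generated text from the response.
```

Tools like the ones described above wrap this kind of call in a friendlier interface, which is why you can use them without ever seeing the API.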

III. Different Types of Models

Text Generative Models. Designed to produce human-like text, these models analyze a corpus to learn its structure and then generate novel sentences, paragraphs or documents. They capture syntax, grammar, thematic consistency and context, and they respond to creative prompts. Applications include chatbots, content creation and language translation.

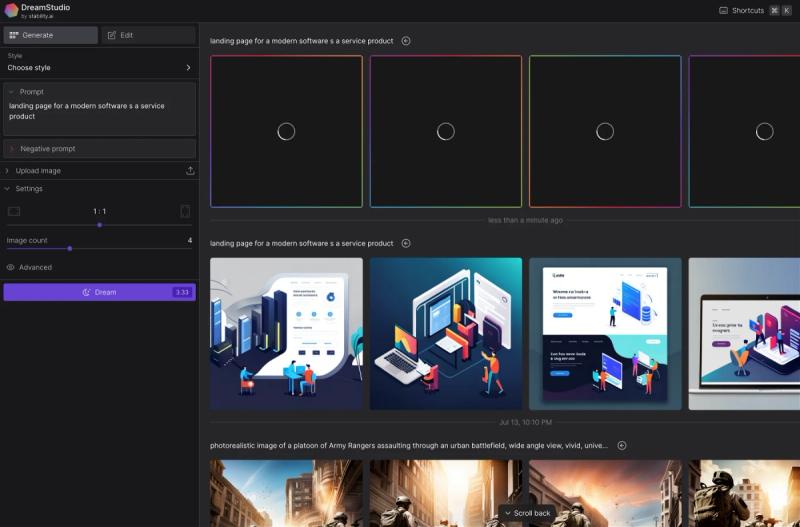

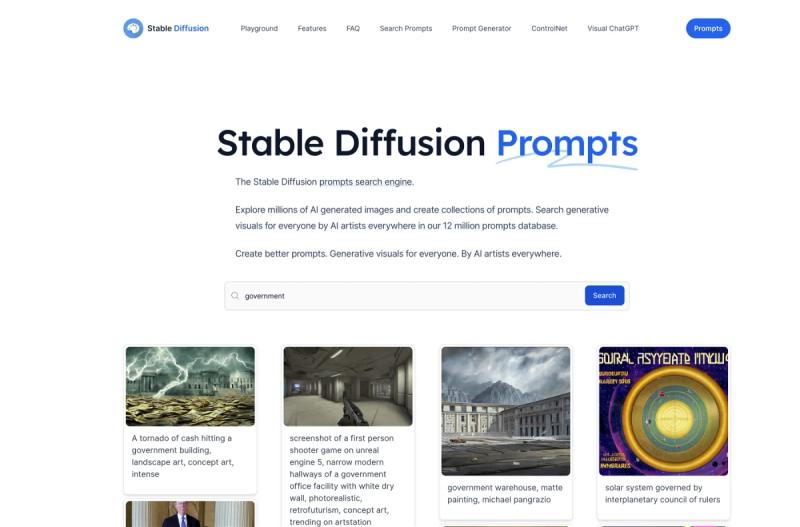

Image Generative Models. These models learn patterns from a dataset of images in order to generate new, unique images. They can capture complex distributions, produce high-quality images and perform image-to-image translation, but they require vast amounts of training data, and controlling the generated output is challenging. Applications include product design, virtual environment creation and data augmentation for machine learning.

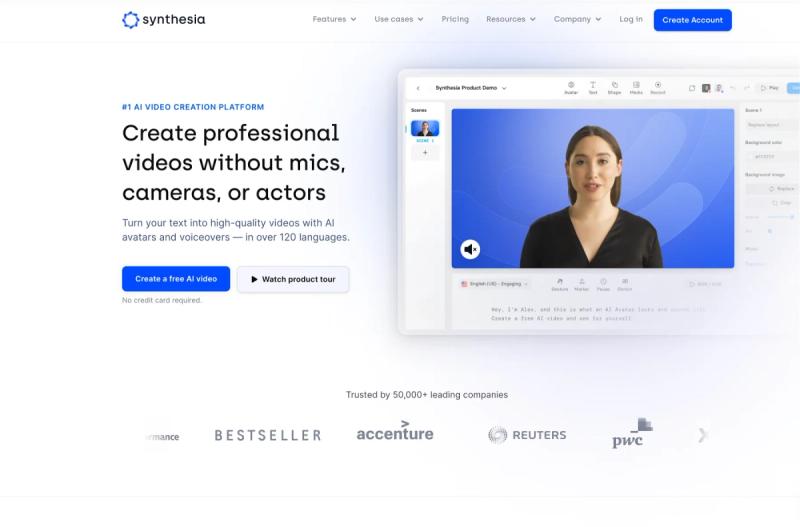

Video Generative Models. These extend image models to time series, generating the frames that make up a video. They handle spatio-temporal data and can generate complex sequences, but they require significant resources to train. Applications include creating synthetic videos, generating animations and data augmentation for surveillance. To be honest, this stuff is still in the lab. There are more useful tools that don’t use AI, like Pictory.AI, which basically converts your text into a series of contextually relevant video snippets with text overlaid. Less cool, but way more functional than the science project below from Facebook.

Voice Generative Models. These are trained on audio data to generate novel sequences resembling human speech or music. They capture nuances in pitch, tone and rhythm, but creating high-quality voice output is challenging. Applications include speech synthesis, music generation and dubbing in films.

Other Generative Models. These include music and 3D object models. Music models learn rhythmic and melodic patterns and create compositions, while 3D models generate new shapes from learned representations. Applications include creating music compositions, designing objects for 3D printing and generating virtual reality environments.

Mixed Uses. This means using one type of model to aid another, such as a text model generating prompts for an image or video model, or an image model creating a text description of a real photo. Combined uses have the potential to create better overall outcomes, but they do require a little more work because you have to be adept with multiple models, which you will be soon.

So what?

So there are a bunch of different types of models. What does this all have to do with GovCon?

Ask yourself that question, and start thinking about what it means to you. What are you doing now that could be faster or better with the aid of AI? Writing is an obvious one: there is so much writing in government work that the speed added by a generative text model needs no explaining.

What about the rest?

Let’s talk about images. How many PowerPoint decks do you build or contribute to? How about desktop publishing for proposals, company branding and advertising?

What about video models, though? No one makes videos. But what about training content? How many computer-based training courses do you have to take that have either static slides or some corny old imagery (looking at you, DoD Cyber Awareness Challenge 2.0)? Imagine being able to spin up relevant videos for new training content, or adding video to presentations or company branding content. In the past, video production was incredibly expensive; now it’s available to everyone.

IV. Practical Exercise

- Check out Hugging Face. Don’t be intimidated; we’ll stick to the useful demos, like Stable Diffusion 2.1.

- Try this prompt and see what you get. Play around with the words and see if you can find a combination that you like:

  - a picturesque landscape, mountains, river, at sunrise, ultra realistic

- Copy your favorite image and share it in the group.

- Give feedback below
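If you would rather explore the prompt systematically than change words by hand, the short sketch below generates every combination of a few word choices that you can then paste into the demo one at a time. The base prompt is the one from the exercise; the alternative words and the `build_prompts` helper are illustrative assumptions, so swap in your own.

```python
from itertools import product

# The first entry in each list comes from the exercise prompt;
# the alternatives are made-up examples to experiment with.
subjects = ["mountains, river", "desert, canyon"]
times_of_day = ["at sunrise", "at sunset"]
styles = ["ultra realistic", "watercolor painting"]


def build_prompts(subjects, times_of_day, styles):
    """Return every combination as a comma-separated prompt string."""
    return [
        f"a picturesque landscape, {subject}, {time}, {style}"
        for subject, time, style in product(subjects, times_of_day, styles)
    ]


prompts = build_prompts(subjects, times_of_day, styles)
for prompt in prompts:
    print(prompt)
```

With two options in each of the three lists, this prints eight prompts, starting with the original one from the exercise. Changing one element at a time makes it easier to see which words actually drive the differences in the images.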
